Telling AI to not replicate itself is like telling teenagers to just not have sex

Do humans have the capacity for safe AI? Our history shows innovation and technology advancements are replete with unintended consequences.

Who knew that widespread social-media adoption would lead to disinformation campaigns aimed at undermining liberal democracy, when it was originally thought it would increase civic engagement? After all, AI not only enables the development of autonomous vehicles, but also autonomous weapons. Who wants to contemplate a possible future where self-aware AI becomes catatonically depressed while in possession of nuclear launch codes?

While we look forward to a future where humans use AI to enhance our existence, we need to consider what steps are being taken to get us there. In particular, we should be concerned with the fact that AI is being developed to replicate itself, potentially embedding biases into the algorithms that will underpin and drive our tomorrow—and repeating them, writ large.

The consequences of this could be dire: to err is human, but to truly foul things up requires a computer.

Learning to learn

AI will soon become capable of self-replication—of learning from and creating itself in its own image. What currently keeps AI from learning “too fast” and spiraling out of control is that it requires a vast amount of data on which to be trained. To train a deep-learning algorithm to recognize a cat with a cat-fancier’s level of expertise, you first must feed it tens or even hundreds of thousands of images of felines, capturing a huge amount of variation in size, shape, texture, lighting, and orientation. It would be much more efficient if, like a person, an algorithm could develop an idea about what makes a cat a cat from fewer examples, just as we humans don’t need to see 10,000 cats to recognize one sauntering down the street.

A Boston-based startup, Gamalon, has pioneered a technique it calls “Bayesian program synthesis” to build algorithms capable of learning from fewer examples. A probabilistic program can determine, for instance, that it’s highly probable that cats have ears, whiskers, and tails. As further examples are provided, the code behind the model is rewritten, and the probabilities tweaked. At a certain point, the AI program takes over, and models are created on their own. In other words, it is learning how to teach itself instead of us needing to teach it.
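The probability-tweaking step described above can be illustrated with a toy sketch. This is not Gamalon's technique: it swaps in plain Beta-Bernoulli updating and does no program synthesis at all, so the model's code is never rewritten. The class `FeatureBelief`, the function `learn_from_examples`, the uniform prior, and the three sample cats are all hypothetical, chosen only to show how a belief such as "cats have tails" might shift after a handful of examples.

```python
from dataclasses import dataclass


@dataclass
class FeatureBelief:
    """Beta(alpha, beta) belief about how often a feature appears in cats."""
    alpha: float = 1.0  # assumed prior pseudo-count for "feature present"
    beta: float = 1.0   # assumed prior pseudo-count for "feature absent"

    def update(self, present: bool) -> None:
        # Each labeled example adds one count to the matching side.
        if present:
            self.alpha += 1
        else:
            self.beta += 1

    @property
    def probability(self) -> float:
        # Posterior mean of the Beta distribution.
        return self.alpha / (self.alpha + self.beta)


def learn_from_examples(examples):
    """Update one belief per feature from a list of {feature: bool} dicts."""
    beliefs = {}
    for example in examples:
        for feature, present in example.items():
            beliefs.setdefault(feature, FeatureBelief()).update(present)
    return beliefs


# Hypothetical data: three cats, one of which has no visible tail.
examples = [
    {"ears": True, "whiskers": True, "tail": True},
    {"ears": True, "whiskers": True, "tail": False},
    {"ears": True, "whiskers": True, "tail": True},
]
beliefs = learn_from_examples(examples)
for feature, belief in beliefs.items():
    print(f"{feature}: {belief.probability:.2f}")
```

With only three examples the sketch already rates ears and whiskers as more probable than a tail, which is the sense in which such a model can form an idea of a cat from far fewer images than a deep-learning system needs.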

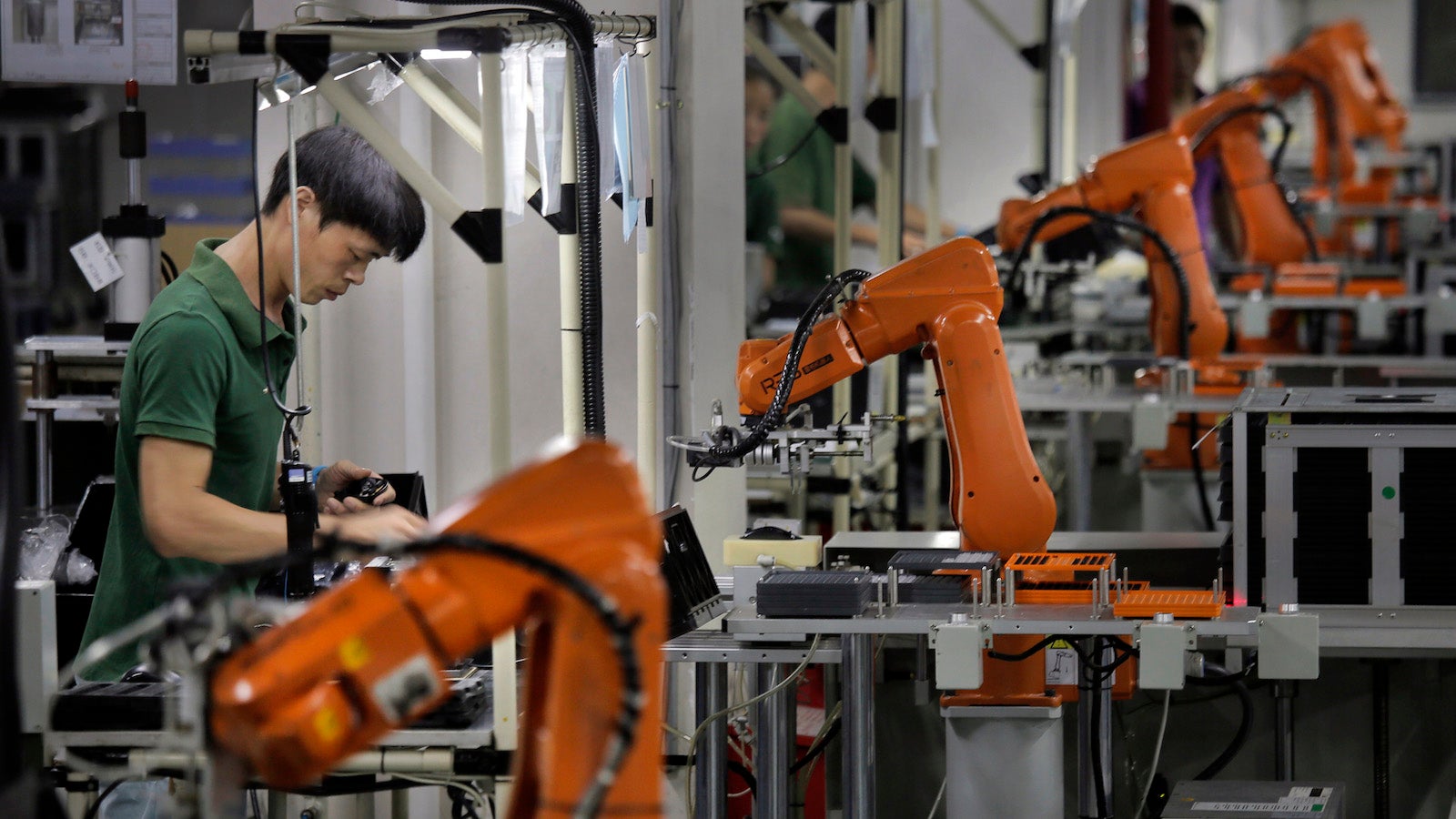

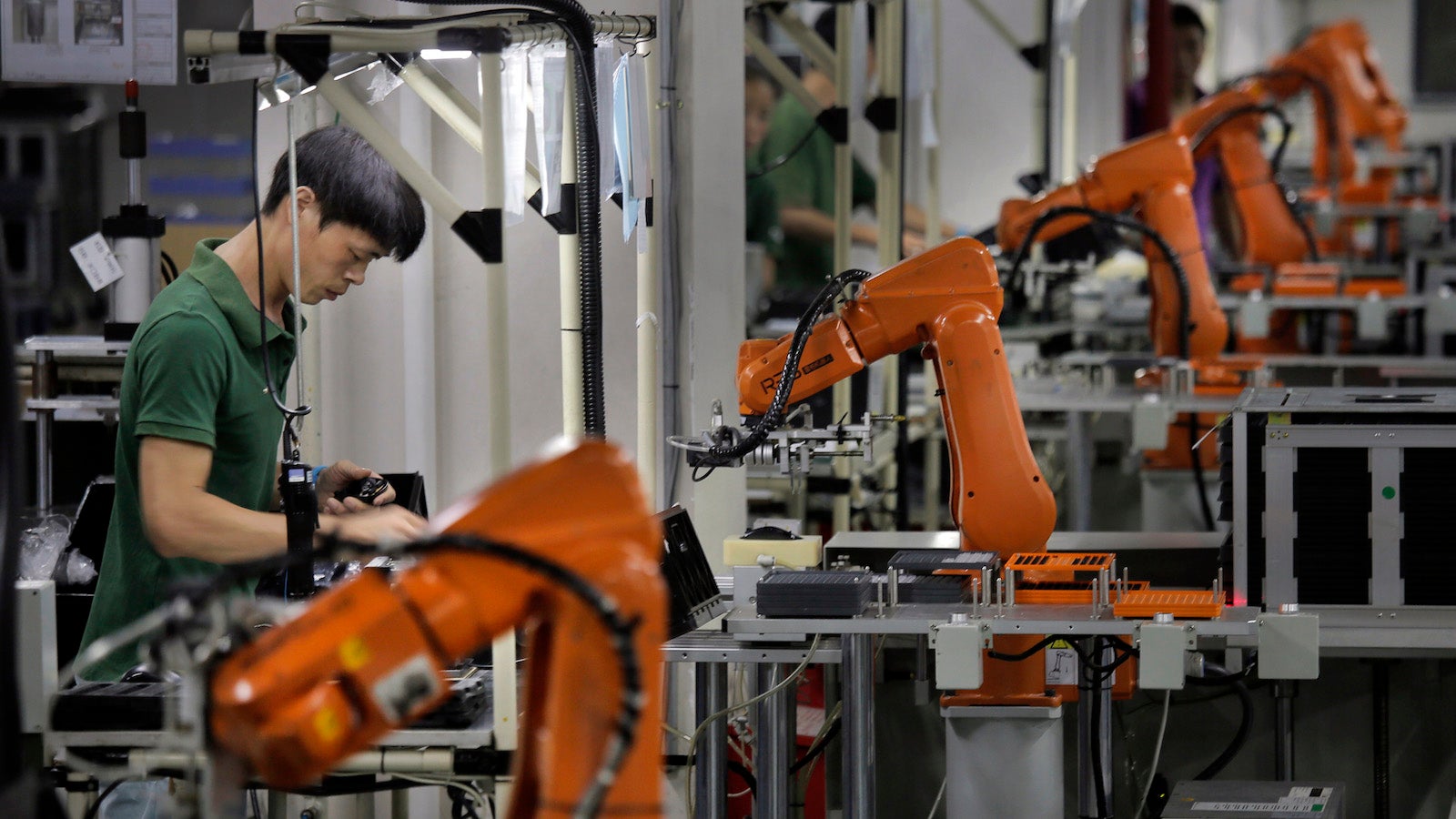

Initially, self-replicating AIs seem like a cost-effective idea. In large part this stems from the fact that there is only a small group of people who possess the education, experience, and talent necessary to create the algorithms underpinning AI. While AI is a key part of scalable new technologies, there is a human bottleneck, with only 300,000 AI engineers on the planet, according to Chinese technology company Tencent. To continue at the pace we’re on, we’ll need millions. To compare, Mensa has only 134,000 members.

Genius is in short supply. If we want to see cost reductions and productivity gains in the industry any time soon, AIs that can teach themselves are a necessary force for the creation of the AI-driven economy.

Myriad AI meta-learning efforts are underway to accelerate the pace at which AI can be developed and deployed. For example, Alphabet has a Google project named AutoML. ML stands for “machine learning,” whereby computer algorithms can learn to perform various tasks on their own by analyzing data. AutoML is a machine-learning algorithm that learns to build other machine-learning algorithms. (Very meta.) And Alphabet is far from alone in this endeavor: Companies such as Baidu, Facebook, IBM, Microsoft, and others are eagerly competing to build the brave, new technology platform of AI.

Governments are also playing a role. DARPA has a program named L2M, which stands for “Lifelong Learning Machines,” to which it has allocated $65 million. Through L2M, DARPA is seeking to develop AI that learns continuously, adapts to new tasks, and is able to prioritize and discriminate in determining what to learn and when. L2M’s goal is to “have the rigor of automation with the flexibility of the human.”

When the master becomes the student

AI systems can share the flaws of their human creators. We are now dealing with a world where ideas are embedded within AI, and not always the right ones. In May 2016, ProPublica reported that an algorithm widely used by police forces across the US was skewed against African Americans: the predicted rates of reoffending were likely to be far higher for African American suspects than for those of other ethnicities. This small bias was replicated across many different systems, which learned from their own output and only further reinforced the original racist bias.

Once we’ve given an AI an idea, once we’ve embedded a small implicit bias into its foundation, any effort to keep it from repeating and exacerbating that idea is like telling teenagers to just not have sex.

Think back to the conversation your parents had with you about the birds and the bees. Were you really listening, or was there some kind of hormonal override that kept you from listening, no matter how well-intentioned your parents’ chat? Much like a teenager’s hormones, there isn’t necessarily an off button. Once an idea is put in place, we can’t simply hope for the best. Adolescents will often disregard the possible consequences of sex (pregnancy, STDs) given the immediate benefits (fun, frivolity). Will AI listen to its well-intentioned masters? Or will it be so hopped up on the robot equivalent of hormones that it will just keep pushing forward, with little regard for the consequences?

If we don’t think about what we’re creating now, the future ahead looks bleak. We stand at a point not dissimilar to when Copernicus postulated that Earth was not the center of the universe, a revolution that was both conceptual and far-reaching in its implications. Well aware of the possible consequences of publishing his views, Copernicus delayed publication until the very end of his life, leaving it to others such as Galileo to bear the brunt of institutional opprobrium, sanction, and possible execution.

If humanity is no longer to be the core of our civilization, we should spend some time considering what our future is to be. We may not have Copernicus’s luxury of waiting for a future generation to solve the problem—as our future appears to be happening right here, right now.