200,000 volunteers have become the fact checkers of the internet

Founded in 2001, Wikipedia is on the verge of adulthood. It’s the world’s fifth most popular website, with 46 million articles in 300 languages, yet it has fewer than 300 full-time employees. What makes it successful is the 200,000 volunteers who create it, said Katherine Maher, the executive director of the Wikimedia Foundation, the parent organization of Wikipedia and its sister sites.

Unlike other tech companies, Wikipedia has avoided accusations that malicious actors used it in any major way to subvert elections around the world. Part of this is because of the site’s model, in which the creation process is largely transparent, but it’s also thanks to its community of diligent editors who monitor the content, Maher said during an event hosted by Quartz in Washington DC on April 26.

Who are the editors?

“A lot of them have jobs, a lot of them are students, a lot of them are retirees…We have ‘Wikimedians’ who are academics who work at major universities. We have folks who are 14-year-olds who really just love what they do,” Maher said in a conversation with Quartz White House correspondent Heather Timmons.

Their fundamental belief, she said, is that by sharing knowledge, you can make the world a better place. “I always think of this as a very generous response from humanity to build something for which they derive no extrinsic benefit. It’s all intrinsic.”

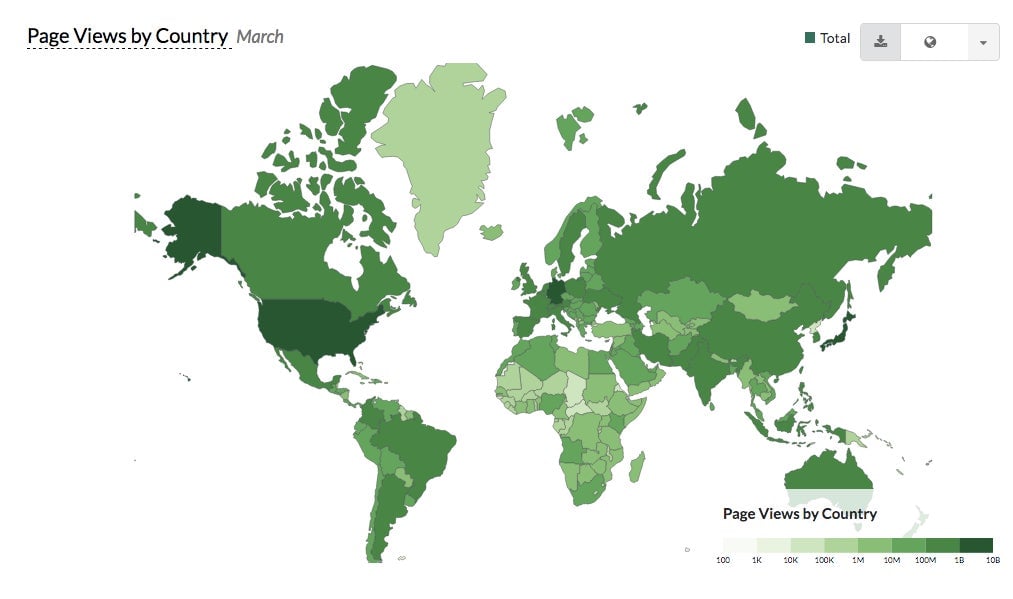

It’s an international community, Maher said, which grew rapidly until 2006, when its numbers dropped off. It has since been relatively stable. The next goal for the organization, she said, is building for emerging markets, where it sees participation growing. The needs of an editor in Chad and an editor in Washington DC are different, she said, with the key question now being: “How do we lower the barrier to entry?”

On what is truth on Wikipedia

Somewhat unwittingly, Wikipedia has become the internet’s fact-checker. Recently, both YouTube and Facebook started using the platform to show more context about videos or posts in order to curb the spread of disinformation—even though Wikipedia is crowd-sourced, and can be manipulated as well.

Maher said she doesn’t see Wikipedia as the arbiter of the truth. The aim is something else. “I don’t think of it as truth, I think of it as the best understanding of what we know right now. We’re constantly negotiating what truth actually means.”

As the world evolves, Wikipedia evolves. Editors “are making judgments at a rate of 350 times a minute.”

“Wikimedians don’t believe in objectivity, it’s not ‘this thing says this’ and ‘this side says that’. They believe in this thing they call neutrality. They will look at the preponderance of information published by what they call reliable, reputable sources, and they’ll say: ‘the preponderance of information says that [for example] anthropogenic climate change—it’s a thing.’”

The bulk of such an article will be information on the science, and it will have a much shorter section on the political controversy. Likewise, the article about flat Earth, still a popular conspiracy theory, will not portray the concept as something that has legitimate scientific evidence behind it.

Since every edit on a Wikipedia page is recorded, it offers a view of how knowledge is changing. “I think the sociologists of the future will have a rich, rich mine to understand the way our common narratives shift as different voices have agency in these conversations,” Maher said.

On how malicious actors are rooted out

While no evidence of organized, widespread election-related manipulation on the platform has emerged so far, Wikipedia is not free of malicious actors, or people trying to grab control of the narrative. In Croatia, for instance, the local-language Wikipedia was completely taken over by right-wing ideologues several years ago.

For years, the platform has also been battling the problem of “black-hat editing,” which is done surreptitiously by people who are trying to push a certain view.

But, Maher said, longtime Wikipedia editors are able to spot this kind of activity and conduct their own investigations to weed such actors out.

“We’re trying to understand how our content can be manipulated,” Maher said, when asked whether the group had looked into potential Russian manipulation on the platform. It appears that if articles are very large and popular they aren’t affected, she said, because so many members of the community are monitoring that content.

“As every change is made, someone is getting pinged somewhere in the world, saying ‘come look at this and make sure this is in line with policy, that it’s not vandalism, that it’s a truthful contribution.’”

Instead, the problems arise at the margins. It becomes dangerous when the content travels to other platforms, because people use Wikipedia to create legitimacy. “This is happening on every single platform,” she said. But, “our platform has antibodies that protect it from happening on a mainstream level.”

Overall, quality on Wikipedia is created over time. “We move slow but we build things,” she said, flipping Facebook’s infamous one-time motto, “move fast and break things,” on its head.

On the role of AI

About 200,000 editors contribute to Wikimedia projects every month, and together with AI-powered bots they made a total of 39 million edits in February 2018. In the chart below, group-bots are bots approved by the community, which do routine maintenance on the site, such as looking for vandalism. Name-bots are users who have “bot” in their name.

Like every other tech platform, Wikimedia is looking into how AI could help improve the site. “We are very interested in how AI can help us do things like evaluate the quality of articles, how deep and effective the citations are for a particular article, the relative neutrality of an article, the relative quality of an article,” said Maher. The organization would also like to use it to catch gaps in its content.

“Wikipedia is like a mirror held up to society. Where gaps and biases exist in our society, gaps and biases exist in Wikipedia,” Maher said.