The push to create AI-friendly ethics codes is stripping all nuance from morality

Is it immoral to swerve your car into a mother and baby in order to avoid the six schoolchildren who ran into your path? What about if the mother and baby are your mother and sibling? It’s a difficult question, and it should be—philosophers have been debating similar ethical dilemmas for thousands of years, and they still haven’t come up with a set of definitively right answers.

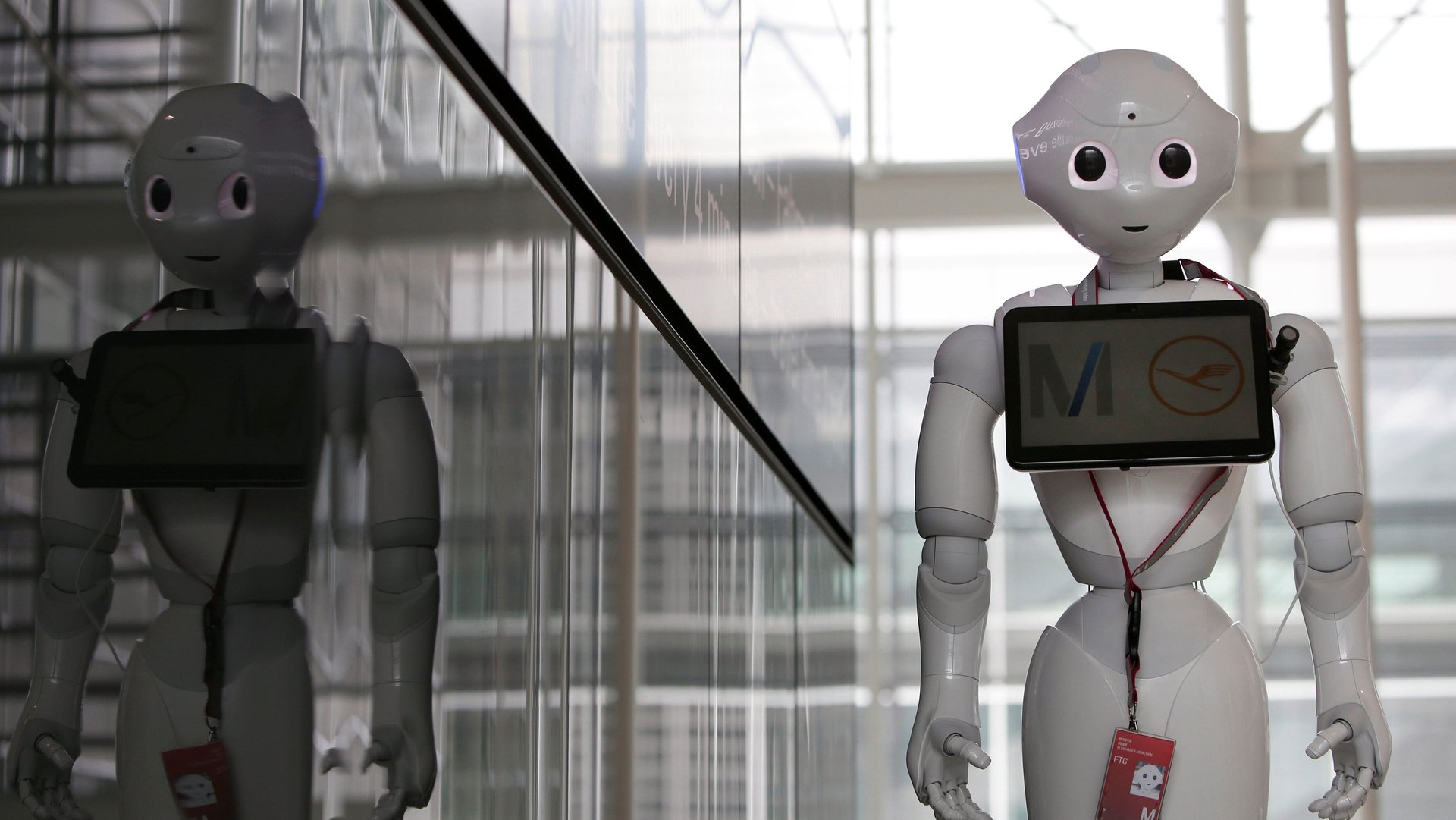

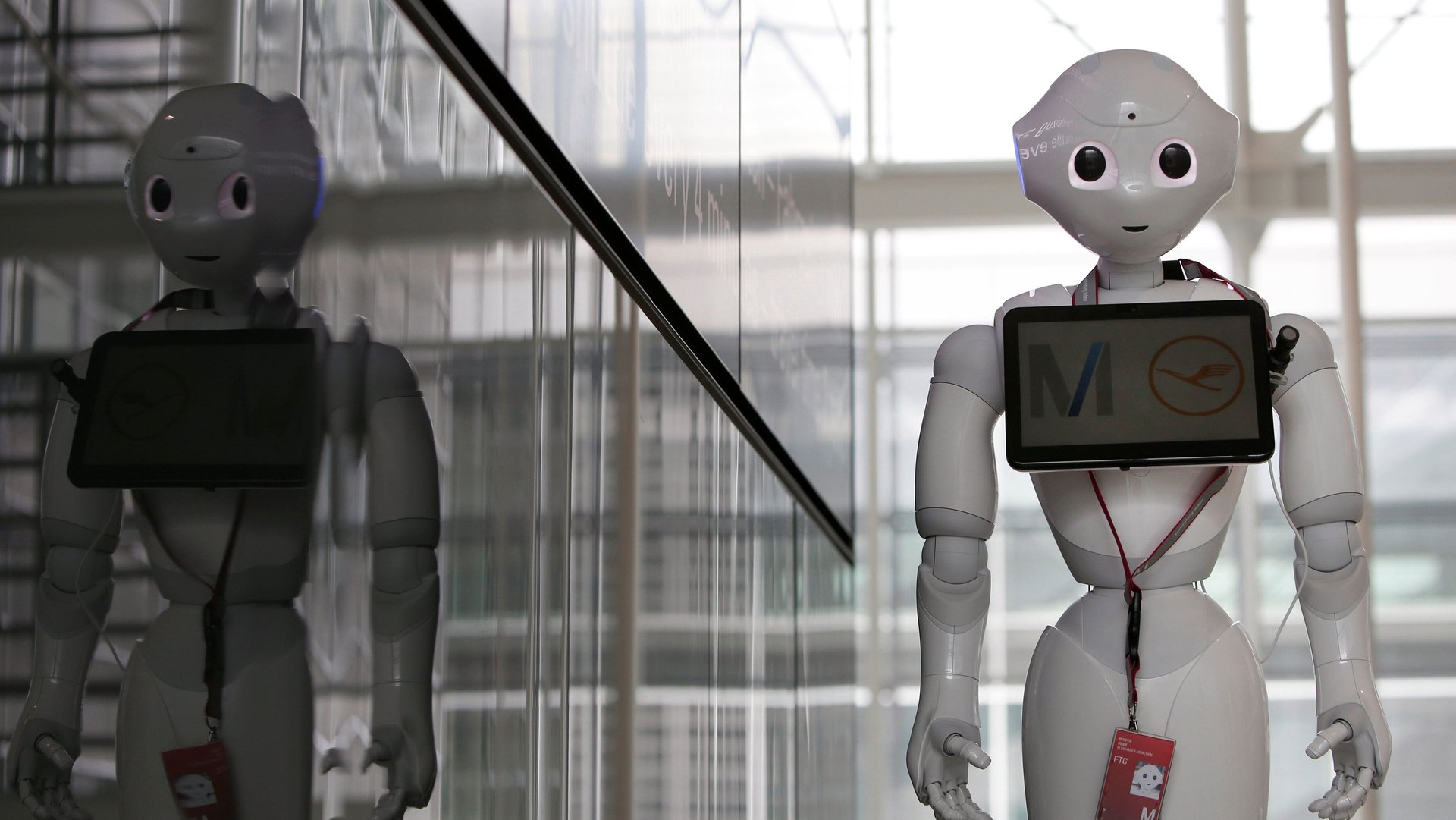

But the push to solve moral questions is being seen in a new, ever-more pressing light, now that machines will soon have to grapple with such complex scenarios. Self-driving cars, for one, could face the situation mentioned above, while AI used in hospitals and elderly care will likely face myriad ethical decisions. A paper led by Veljko Dubljević, neuroethics researcher at North Carolina State University, published yesterday (Oct. 2) in PLOS ONE, claims to establish not just the answer to one ethical question, but the entire groundwork for how moral judgements are made.

According to the paper’s “Agent Deed Consequence model,” three things are taken into account when making a moral decision: the person doing the action, the moral action itself, and the consequences of that action. To test this theory, the researchers created moral scenarios that varied details about the agent, the action, and the consequences. For example:

In one study of 525 participants that evaluated “low-stakes” scenarios, such as the syphilis example above, the researchers found that the action itself (in this case, whether the man lied or told the truth) was the most important factor in determining whether an action was moral. In a second study, which involved 786 participants evaluating high-stakes scenarios like the airplane scenario above that could result in death, the consequences were the strongest factor in determining whether an action was moral.

It’s a neat study, but it’s also potentially concerning that the researchers think it can be used as a basis for AI decisions. “This work is important because it provides a framework that can be used to help us determine when the ends may justify the means, or when they may not,” said Dubljević in a press statement. “This has implications for clinical assessments, such as recognizing deficits in psychopathy, and technological applications, such as AI programming.”

But there are many ways in which this study should not be used to determine whether the ends justify the means. For one thing, it blurs the distinction between how people do make decisions and how they should. The PLOS ONE paper examines how hundreds of people evaluate specific moral scenarios, but does not attempt to address whether all those people are making the right decision. Looking back at history, when slavery was once considered acceptable, or even at contemporary values, where many seem to think that sexual assault is acceptable, it’s clear that millions of people can collectively make morally problematic decisions.

The study also only evaluates a handful of specific scenarios, which means it has limited use in shaping AI ethics. In the airplane scenario presented, it seems reasonable to state that it’s moral to attack someone if doing so prevents multiple murders. But would it be moral to, say, torture a child, if that prevented the destruction of an entire city?

Hopefully, artificial intelligence will never face the question of whether to torture a child. But it’s nevertheless concerning that moral models intended to be of use to AI are presenting such over-simplified notions of ethics. AI will, potentially, have the power to make far more drastic moral decisions than most humans: Machines, after all, have the power to make the same decision over and over again, leading to potentially massively destructive consequences. We have to be sure that they won’t gloss over the nuances.