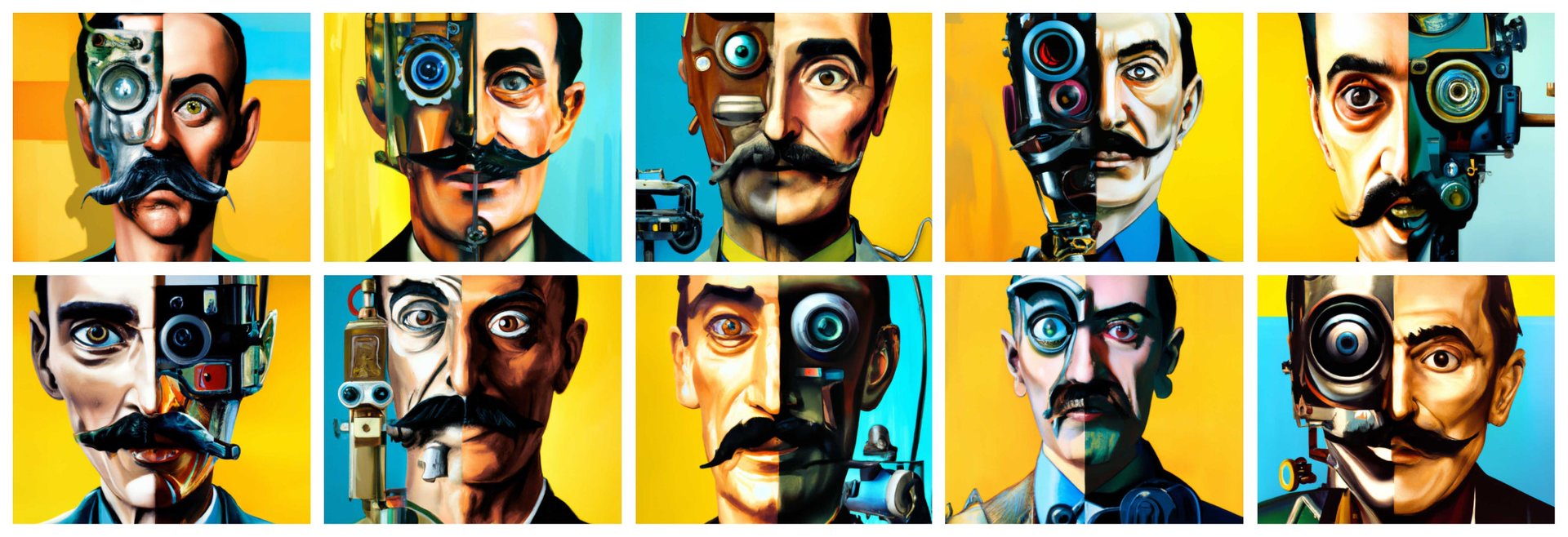

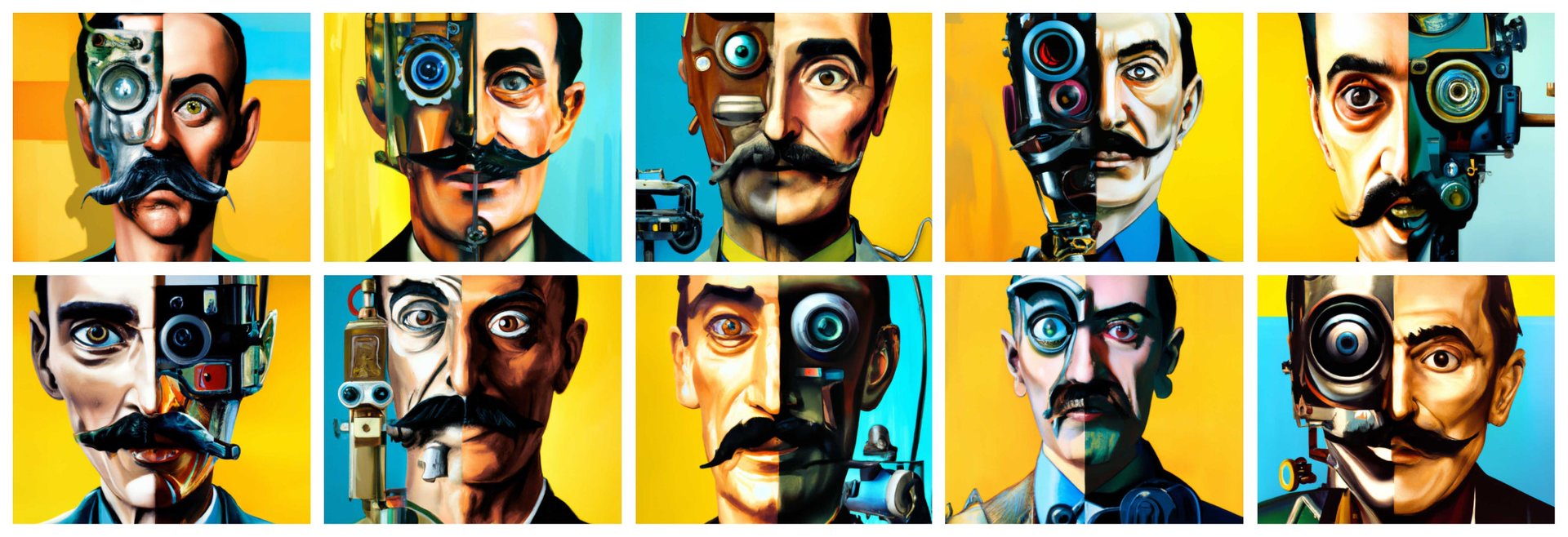

The best examples of DALL-E 2’s strange, beautiful AI art

The research group OpenAI launched in 2015 with $1 billion from Elon Musk and other Silicon Valley titans and a broad mission to create a set of AI tools that “benefits all of humanity.” Microsoft invested another $1 billion in 2019 to help OpenAI develop “artificial general intelligence with widely distributed economic benefits.”

So what do $2 billion and seven years of research for the betterment of all mankind get you? For one, a really excellent picture of an astronaut riding a horse.

This horse, this astronaut, and these stars do not exist in the real world. All are the invention of a computer model named DALL-E 2 (a portmanteau of surrealist artist Salvador Dalí and Pixar robot WALL-E), which OpenAI unveiled in April. The model learned to associate words and images from a database of hundreds of millions of pictures and labels describing their content. If you type in a simple phrase, such as “a photo of an astronaut riding a horse,” DALL-E 2 will generate an image based on its understanding of what “astronaut,” “riding,” and “horse” mean. It will even fill in details based on its ability to associate related concepts; astronauts, for instance, tend to appear against a backdrop of stars.

OpenAI has developed similar projects in the past and released them to the public. Anyone can use OpenAI’s latest language model, GPT-3, to generate stories, articles, and poetry based on simple descriptions. With a little coding expertise, you can use Jukebox to invent full-length songs with lyrics in any style. DALL-E 2 is still in beta testing, but you can sign up for a spot on the waitlist to use it; OpenAI is sending invites to about 1,000 people a week.

OpenAI hopes the public will use tools like DALL-E 2 in whimsical, creative ways, like envisioning a pleasant evening for a pair of partying avocados.

But it’s easy to imagine powerful image generation tools being abused to create deepfakes, political disinformation, revenge porn, and so on. DALL-E 2’s developers have tried to head off that possibility by creating policies that prohibit users from generating images that contain hate, harassment, violence, sex, or nudity, and by blocking certain keywords such as “shooting.”

For now, the DALL-E 2 sample images circulating on social media have all been thoroughly safe-for-work. Here are a few of our favorite examples, culled from an OpenAI Twitter thread, the DALL-E 2 Instagram page, the Twitter account @Dalle2Pics, and DALL-E 2 users on social media.

DALL-E 2 empowers animals to live their best lives

DALL-E 2 serves up surreal foods

DALL-E 2 recreates ancient artwork

DALL-E 2 cursed images

But beware: Not every DALL-E 2 artwork is an inspiring and beautiful leap forward in the field of AI. Some are extremely cursed, and they remind us that humanity cannot always be trusted to wield such fearsome power.