What we miss about AI when we’re worried about killer robots

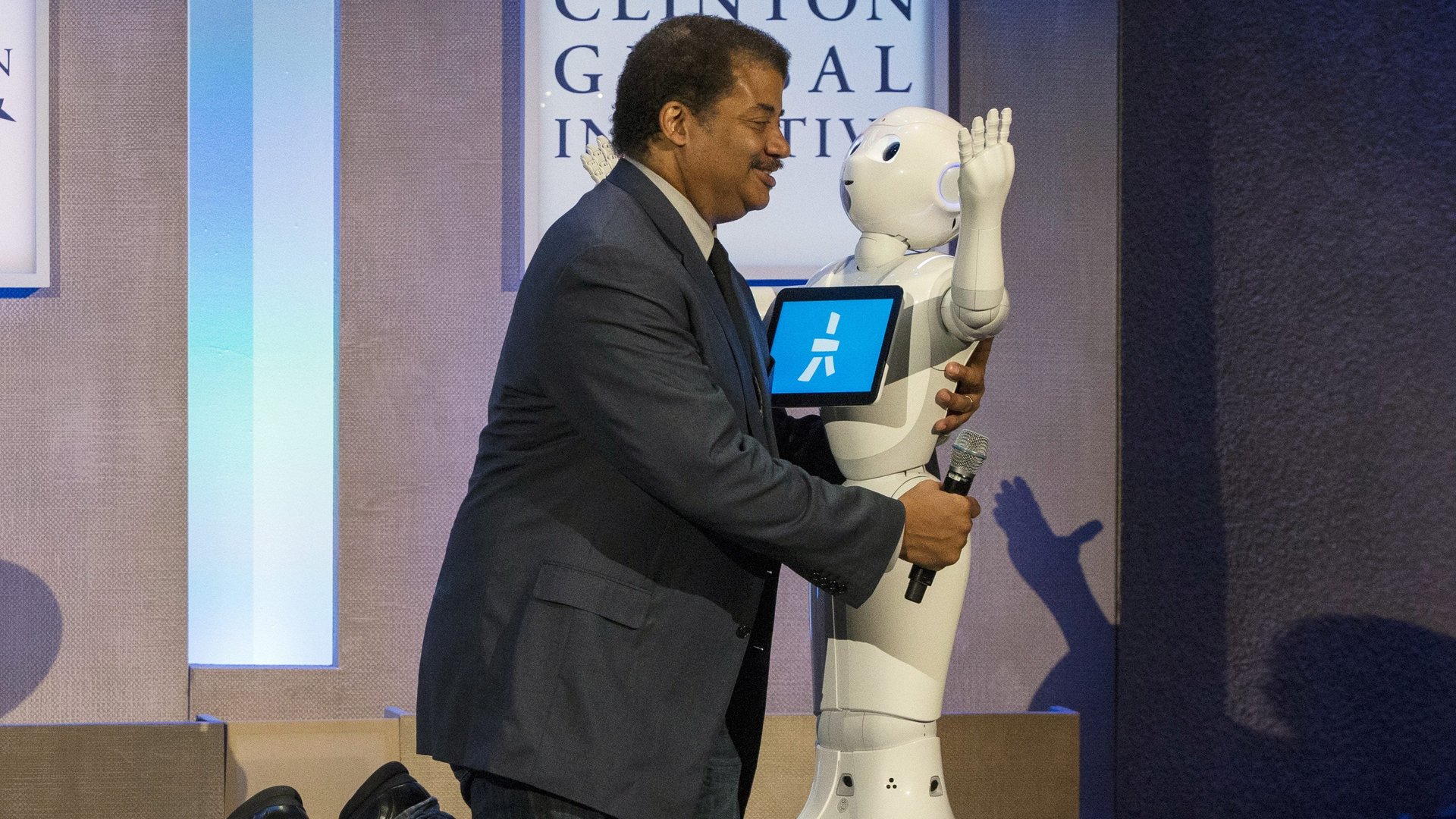

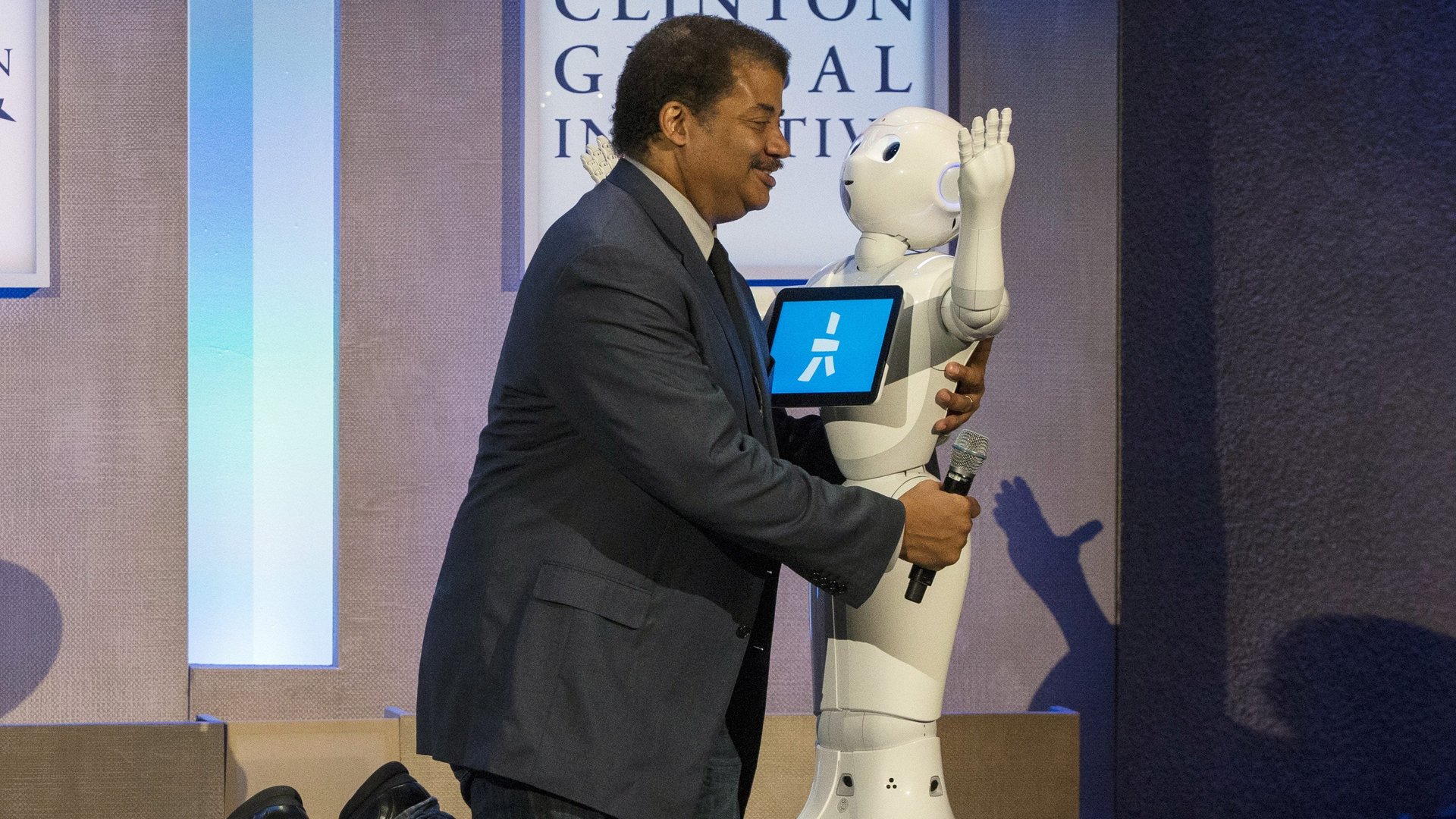

At the American Museum of Natural History in New York last night (Feb. 13), astrophysicist Neil deGrasse Tyson took the stage with some of the world’s foremost experts in artificial intelligence, representatives from Google, IBM, MIT, iRobot, and the University of Michigan.

After they were all seated, Tyson had the lights dimmed and then promptly undermined the reason the panelists were there in the first place. He played a video from Boston Dynamics showing two four-legged robots, one with a long arm that opened a door for the other. The crowd murmured and cheered as the robots overcame the obstacle. Tyson implored the audience to read the comments on the video at home later.

“The first comment was ‘We’re all going to die!’ but then the second comment was ‘Those were polite robots!’” he said. “So for me this captures the split in people’s attitudes, and understandings, and reactions when they become eyewitness to AI.”

There’s a fundamental problem with that reasoning: People interact with artificial intelligence every day, in ways more significant than robo-dogs opening doors for each other. We are all eyewitnesses when we open Facebook or search for something on Google. Every time we use a navigation app, a machine learning algorithm is analyzing traffic patterns and train times and charting the most efficient route. Judges today use artificial intelligence algorithms to help determine whether a person might commit another crime. Police departments are using algorithms to predict where crime could happen in the future. If we want to talk about Minority Report, it’s here. There’s no Tom Cruise and there are no fancy glass computer monitors, but the potential effects on minority populations are just as real. Airports are starting to use artificial intelligence for security, and recent reports out of China detail police wearing AI-powered facial recognition cameras that automatically flag criminals on the lam.

Each of these applications of artificial intelligence is here in the present, and each drags a litany of thorny questions in its wake. How can Facebook be gamed to change a person’s opinion? Are the algorithms used in criminal justice fair? These questions are more real to the average person (who uses Facebook and Google, and who could be arrested and sentenced according to these algorithms) than any robot Boston Dynamics could make in the next few years.

It’s naturally important and interesting to think about how AI might be used to cause harm. But human enslavement by robots is a trite conversation when AI software trained to autonomously hack other computers is something that exists today, set to task by malicious humans.

Tyson’s questions over the course of the night had a similar theme (and though the event was billed as a debate, no central question was ever posed to be debated). He plucked ideas from science fiction, like Isaac Asimov’s Three Laws of Robotics, and asked iRobot cofounder Helen Greiner if she used those principles in her company’s robots. Greiner gently answered that, in the real world, we don’t have the luxury of coding abstract ideas like never harming humans into simple mathematical machines.

At one point, Tyson detailed plot points from the Will Smith movie I, Robot, and then asked whether the plot device that turned the movie’s robots evil meant that Boston Dynamics robots would one day get mad at humans for beating them up. It’s a question that fundamentally misunderstands how artificial intelligence algorithms are constructed and trained: there’s no consensus that artificial consciousness is possible; an algorithm learns only what humans have shown it; and algorithms don’t have a will to live, emotions, or a sense of kinship with other algorithms. Of greater consequence, the question draws focus away from developments like Google already trying to make medical decisions inside hospitals, based on techniques nobody really understands.

It’s not clear whether Tyson was simply trying to frame the conversation in terms an audience of laypeople could most easily relate to. But fears of Skynet planted by the likes of Elon Musk (whose Tesla business owes a great deal to AI) are a distraction from the real ethical questions that should trouble us. During the Q&A session after Tyson finished his questions, an audience member asked how companies like IBM could keep the bias inherent in humans out of machines, and IBM’s Ruchir Puri explained that his company was trying to come up with algorithms to do just that. That may be true of IBM, but the problem is far from solved: inherent bias shows up in everything from how AI processes language to facial recognition. When the audience member pressed further, asking about unknown biases that we might not observe in data (a totally valid question), Tyson said that much of science was focused on removing bias from data and told them not to worry.

“You should rest easy tonight,” he told the audience member as they walked away from the microphone near the stage. Many computer scientists and AI researchers would disagree with that sentiment.

Science fiction can be an incredible tool for getting people interested in science and artificial intelligence; Greiner said during the panel that watching Star Wars and seeing R2-D2 made her want to be a roboticist. But when science fiction becomes the only basis for discussion on real topics, it can supplant meaningful discussion about what’s happening around us.

And it did last night.