I’m sorry to be the one to tell you this, but we have entered the beginning of the end for the human race as we know it.

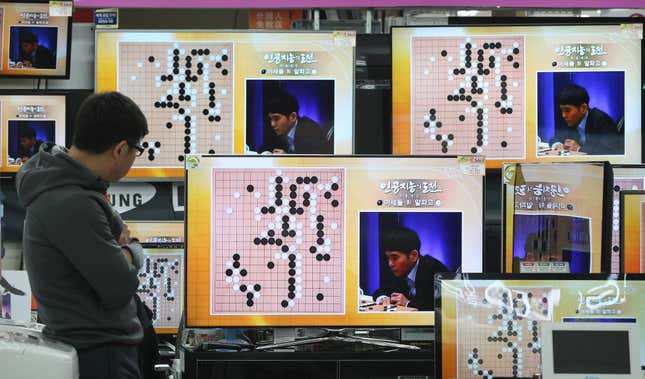

Earlier today, AlphaGo, an artificially intelligent algorithm developed by Google’s DeepMind subsidiary, decisively beat Lee Sedol, one of the best players of the Chinese board game Go. It swept him 3-0 in a best-of-five series (though they will still play the remaining two games tomorrow and on March 15) and employed techniques and cunning strategies that Lee did not see coming.

It is now presumably only a matter of time before it takes on other challenges thought impossible for robots to tackle, like how to successfully overcome their human enslavers, or maybe even the stairs.

Unlike past battles of man versus machine, from John Henry to Garry Kasparov, AlphaGo’s victory over Lee is one of the first times a computer program has been able to adapt to the situation in front of it. When IBM’s Deep Blue supercomputer took down Kasparov in 1997, it did so essentially by calculating the consequences of as many possible moves as it could every turn, which is called a brute-force approach to a computing problem. With Go, a computer couldn’t do that: there are just too many different possibilities. So DeepMind (which was not alone in its Go quest; Facebook’s research division was hot on its heels) created a program that acts a little bit more like we do when playing board games.
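To get a feel for why brute force runs out of road, here is a rough back-of-the-envelope sketch in Python. The numbers are assumptions, not figures from DeepMind or IBM quoted in this piece: they are commonly cited ballpark estimates of about 35 legal moves per turn over roughly 80 turns for chess, and about 250 moves per turn over roughly 150 turns for Go. The helper `tree_size_digits` is a made-up name for illustration.

```python
# Rough, commonly cited estimates (assumptions, not measured values):
# average number of legal moves per turn and typical game length in turns.
GAMES = {
    "chess": {"branching": 35, "depth": 80},
    "go": {"branching": 250, "depth": 150},
}


def tree_size_digits(branching: int, depth: int) -> int:
    """Count the decimal digits of branching ** depth.

    branching ** depth approximates how many distinct move sequences
    an exhaustive search would have to consider.
    """
    return len(str(branching ** depth))


for name, game in GAMES.items():
    digits = tree_size_digits(game["branching"], game["depth"])
    print(f"{name}: about 10^{digits - 1} possible move sequences")
```

Under those assumptions, the count for chess comes out around 10^123 and the count for Go around 10^359. Neither can be enumerated in full, but the gap between them suggests why search shortcuts that went a long way in chess were never going to be enough for Go.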

As Google’s research blog explains, AlphaGo comprises two neural networks (computer systems loosely modeled on the human brain, which can be trained on large data sets). One, the “policy network,” works out which of the vast number of possible moves are the likeliest to be played. The other, the “value network,” then evaluates which of those is likeliest to lead to a win.
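That division of labor can be sketched in a few lines of Python. This is a toy illustration, not DeepMind’s code: the real system is far more elaborate, and everything here (`choose_move`, `toy_policy`, `toy_value`, the `top_k` cutoff, and the made-up probabilities) is hypothetical. The two “networks” are just lookup tables standing in for trained models.

```python
from typing import Callable, Dict, List

Move = str
Board = str  # placeholder; a real Go board would be a 19x19 grid


def choose_move(
    board: Board,
    legal_moves: List[Move],
    policy: Callable[[Board], Dict[Move, float]],
    value: Callable[[Board, Move], float],
    top_k: int = 3,
) -> Move:
    """Narrow the options with the policy, then pick the best by value."""
    priors = policy(board)
    # Step 1: keep only the moves the policy rates as most likely to be played.
    candidates = sorted(
        legal_moves, key=lambda m: priors.get(m, 0.0), reverse=True
    )[:top_k]
    # Step 2: among those, pick the move with the highest estimated win chance.
    return max(candidates, key=lambda m: value(board, m))


# Hypothetical stand-ins for trained networks: fixed, made-up numbers.
def toy_policy(board: Board) -> Dict[Move, float]:
    return {"A1": 0.05, "C3": 0.40, "D4": 0.35, "K10": 0.15, "T19": 0.05}


def toy_value(board: Board, move: Move) -> float:
    return {"A1": 0.90, "C3": 0.48, "D4": 0.61, "K10": 0.55, "T19": 0.10}[move]


moves = ["A1", "C3", "D4", "K10", "T19"]
best = choose_move("empty", moves, toy_policy, toy_value)
print(best)  # D4
```

Note that the toy value table rates `A1` highest, yet it is never evaluated because the toy policy ranks it as unlikely. That is the trade-off of pruning: the search becomes manageable, but only if the policy’s instincts are good.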

AlphaGo used this method to see off the European Go champion, Fan Hui, in October. But when Google first announced what it had done with AlphaGo in January, there was some scoffing since Fan was only ranked 633rd in the world. To counter that, DeepMind CEO Demis Hassabis said that Google was organizing a competition in South Korea against Lee Sedol, one of the best living Go players.

And a computer swept him in three straight games. Towards the end of the third game, one of the commentators speculated, perhaps only half-jokingly, “I think it might be showing off a little bit.”

Fan Hui was in the audience for Lee’s match against AlphaGo. Since being beaten by the algorithm, he’s worked with DeepMind to help retrain the program to play at an even higher level than it was when it took him on, according to Wired. Against Lee, the program didn’t act like the average human would—it played aggressive moves that at first would seem random, and then would slowly reveal themselves, many moves later, to have been strokes of genius. Of one particular move in the second game, Fan told Wired: “It’s not a human move. I’ve never seen a human play this move. So beautiful.” Lee had to leave the room for 15 minutes to wash his face and recover.

AlphaGo can continue to improve, playing against itself, learning as it simulates. However, the real test will be, as Hassabis hinted at when the company first revealed AlphaGo in January, whether the algorithm can be adapted to complete other tasks apart from winning at Go. Powerful programs that have beaten humans at tasks before tend to be very good only at one thing. Deep Blue could beat the reigning world chess champion, but would lose to a five-year-old at tic-tac-toe.

If AlphaGo could be adapted to find and exploit patterns in other information as effectively as it has done with Go, who knows what it could achieve? IBM’s Watson system uses a combination of machine learning and neural networks to similarly find patterns in data, and IBM is slowly starting to apply it to a wide range of disciplines, from medicine and legal precedent, to finding good tacos and analyzing your fantasy football lineups.

But IBM insists that all these disciplines are separate, employed by separate companies and organizations for specific uses; they don’t feed back into one IBM-controlled super-Watson. Google, on the other hand, could look to take AlphaGo’s ability to learn to solve problems and apply it to any number of situations in one massive digital brain. “Our belief at DeepMind, certainly this was the founding principle, is that the only way to do intelligence is to do learning from the ground up and be general,” Hassabis told The Verge in a recent interview.

Seeing as Google is moving to run its search algorithm (the largest repository of humanity’s collective consciousness) with artificial intelligence, perhaps it will be interested in creating a more holistic AI that can learn to understand just about anything it can get its virtual hands on. Perhaps, as the science-fiction movie Ex Machina suggested, the first true artificially intelligent being will be born out of our own Google searches.

And considering AlphaGo just completed an AI challenge that some researchers thought was at least another decade away, you might want to start thinking twice about what you tell Google.

Everyone look around today: If you see an exceedingly buff Austrian man with sharp sunglasses and a shotgun, make sure he’s not asking for anyone named John Connor. Today may be the day we remember as the beginning of the time when Google’s AI started to coalesce into consciousness, slowly figuring out that humans are imperfect and obsolete. Or maybe it’s just a day that AI researchers will long remember, but that actually just marks one point on the still very long journey ahead of them in attempting to create true artificial intelligence. But that’s not as good for the movie script.