India’s real fake-news problem is visual. A startup is betting on AI to solve it

Indian social media has become a ticking time bomb.

Beyond just words, phony videos and doctored photos are racing through the ecosystem, spreading division and sometimes even triggering lynchings.

And while many companies, including giants like Facebook, are trying to find a solution to this problem, a small local startup of six engineers and journalists is using artificial intelligence to try to contain the epidemic.

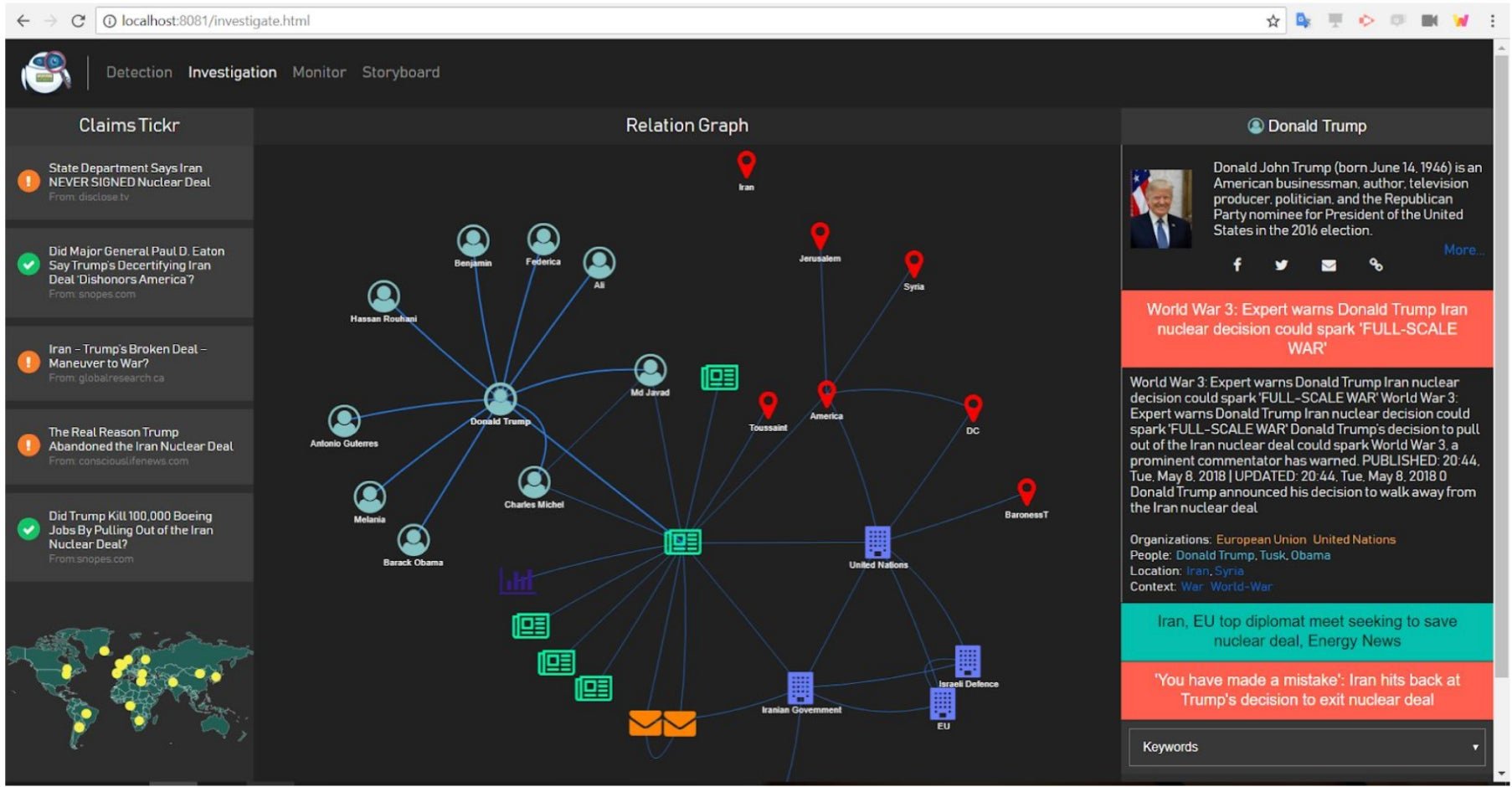

Metafact, founded in 2017, builds artificial intelligence (AI)-based fact-checking resources. The Delhi-based company’s major project is a tool that flags misinformation and bias in news stories and social media posts. While the tool is currently being trained on written English, Metafact also plans to train it to detect manipulation in images and video.

“Our aim is that within the next one year, the tool should be able to show you a whole morphed image in 3D,” said Sagar Kaul, co-founder and CEO of Metafact. The company also has a plan to detect “deepfakes”—AI-made videos created by grafting composite photographs onto existing footage.

AI and fact-checking

Kaul, along with Prateek Roopra and Praveen Kumar Anasurya, conceived of Metafact in 2016, when the election of Donald Trump brought the term “fake news” into the mainstream. They founded the company in 2017, and have so far received funding from South Korea’s Hanyang University and the start-up accelerator NDRC in Ireland. Their biggest coup yet has been securing an arrangement with IBM to use the Watson computing platform to build out their technology.

Metafact’s tool uses natural-language processing—a technique by which AI analyses language patterns. For the past six months, Metafact engineers “have searched lots of claims and articles through the tool and then put that information back into the system,” Kaul said.

But this training process has limitations.

Misinformation in Indian media often spreads in languages other than English, and Metafact’s tool is so far trained only in English. Pratik Sinha, co-founder of fact-checking website AltNews, explained that natural-language processing tools, in general, “only work well in the case of English” because training data sets exist in abundance for English but not for other languages, especially not Indian regional ones.

Sinha also explained the drawbacks of using AI technology to detect visual manipulation. “Figuring out whether an image is morphed or not or a video is manipulated or not is a very tricky subject, and it can also lead to a lot of false positives,” he said.

The WhatsApp problem

WhatsApp, Kaul said, is by far the largest challenge that Metafact faces in the Indian context. The end-to-end encrypted messaging app, which boasts over 200 million Indian users, made headlines across the world after several lynchings in India were precipitated by rumours spread on the platform. “With Facebook posts, you do have an option to fact-check and report fake content,” Kaul said. “With WhatsApp, you have nothing. Once the message goes out, it’s encrypted, there’s no way to know who has sent it.”

Metafact plans on tracking misinformation on WhatsApp with the help of “Metafixers”—volunteers across the country who will forward the company any messages that seem suspicious. Once the company has received similar messages from multiple people, its team will conduct a fact-check on the content. If it proves to be misinformation, they will construct a meme or post countering the misinformation and “push it back into the system” via social media, Kaul said.
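The first step of that workflow, waiting until several different people have forwarded the same message, can be illustrated with a short sketch. This is not Metafact's code: the function names, the threshold of three reporters, and the decision to treat messages as "similar" only when they match after lowercasing and stripping punctuation are all assumptions made for illustration. The article says only that the team acts once it has heard from "multiple people".

```python
import re
from collections import defaultdict

# Hypothetical threshold; the article specifies only "multiple people".
MIN_REPORTERS = 3


def normalise(text: str) -> str:
    """Lowercase, drop punctuation and collapse whitespace so that
    trivially different copies of a forward compare as equal."""
    text = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(text.split())


def messages_to_check(reports, min_reporters=MIN_REPORTERS):
    """Return normalised messages forwarded by enough distinct volunteers.

    `reports` is an iterable of (reporter_id, message_text) pairs.
    Repeat forwards from the same volunteer are counted once.
    """
    reporters = defaultdict(set)
    for reporter_id, text in reports:
        reporters[normalise(text)].add(reporter_id)
    return sorted(
        msg for msg, who in reporters.items() if len(who) >= min_reporters
    )
```

Anything the function returns would still go to a human fact-checker. A real system would also need fuzzier matching than this, since forwarded rumours are often reworded or translated along the way.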

The company plans to reward its Metafixers with points that can be redeemed for a digital currency or another type of online reward. “Unless you incentivise these sorts of things, people say ‘why do I waste my time on this?’” Kaul said.

Tech versus journalism

Metafact’s team currently has three technologists and three journalists. This symmetry reflects the intention the company was built on.

“I’ve seen that tussle between tech and journalism,” Kaul, who spent many years as a journalist and photo editor, said. “Our idea right from the start was that tech and journalism are going to be equally important for the tool.”

Kaul is emphatic that AI tools can never replace journalists. “If you let the machine decide on its own what’s right and what’s wrong, then you’ve lost the game, because it’s very easy to manipulate that whole system,” he said, referring to the 2016 incident in which, days after Facebook replaced its news editors with an algorithm, a fake news story shot to the top of the site’s “trending” list.

The Metafact tool is, however, engineered to help journalists work more efficiently. Roopra, Metafact’s current chief technology officer, explained that while the tool would never be able to confirm that a piece is false, it would be able to provide a journalist with “a list of probable fake claims,” as well as “a context bucket full of links, documents and action items wherein the spread of this probable misinformation is present.”

Metafact hopes its tool will be ready by mid-November, at which point the company will sell subscriptions for it to media houses, brands, and PR consultancies. The company has had preliminary talks with some companies already. “Initially they thought Metafact as some sort of social listening or market intelligence tool, but later on understood the difference,” said Anasurya, Metafact’s chief product officer.

The company has also created an AI-enabled chatbot that helps flag misinformation in news media. The chatbot, which has fewer features than the tool, will be made freely available.

The upcoming months are jam-packed with political events. Metafact, which will seek additional funding starting next month, hopes its tool will be able to learn from them ahead of next year’s general election. The company plans to test its tool in the US midterm congressional elections this November, as well as in India’s upcoming assembly elections.