AI companies are too cheap to pay for legit books

Companies like Meta and OpenAI have been using pirated copies of books to train their AI models

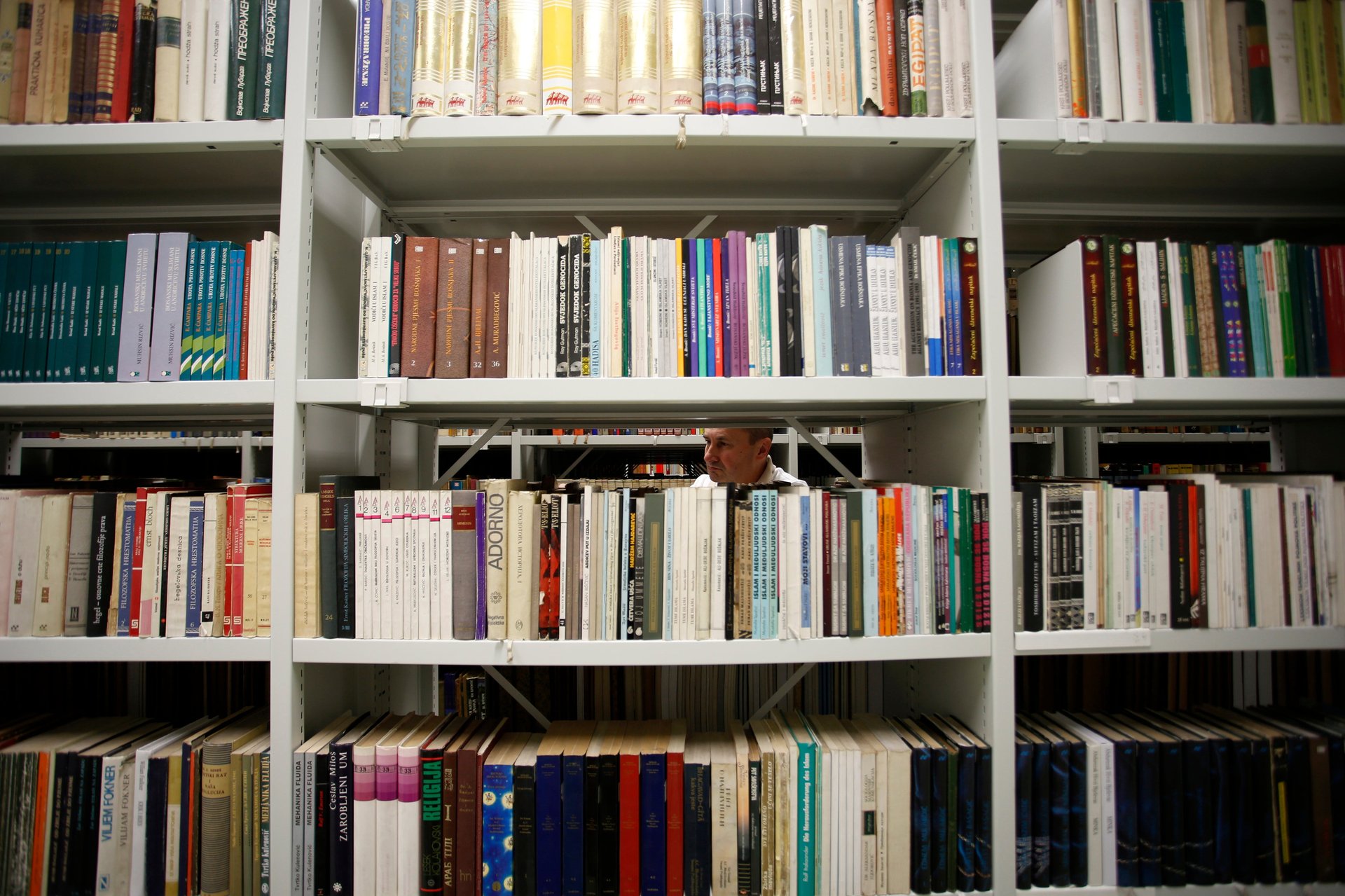

Big tech companies are using published books to train their artificial intelligence models—not just without obtaining authorization from their authors, but also by pirating the books and denying the authors their sales royalties.

In a study published on Sunday (Aug. 20), the Atlantic revealed how OpenAI, Meta, and other tech companies use pirated books from shadow libraries, paying nothing for the content that trains and powers their large language models.

OpenAI uses Books1 and Books2, two corpuses of books drawn from the internet, to train its models. Roughly 15% of the training set for GPT-3 comes from these databases. Court filings by authors who have sued OpenAI claim the company populated Books2 with pirated books from shadow libraries like Library Genesis (LibGen), Z-Library (Bok), Sci-Hub, and Bibliotik.

Similarly, a dataset used by Meta, and analyzed by the Atlantic, was found to hold more than 170,000 books, most of them published in the last two decades. These books, part of a corpus called Books3, were also used to train other language models. “Pirated books are being used as inputs for computer programs that are changing how we read, learn, and communicate,” Alex Reisner, the Atlantic writer, noted. “The future promised by AI is written with stolen words.”

A series of copyright lawsuits has already been mounted against OpenAI, the creator of ChatGPT, for its use of authors’ content without consent or compensation.

Big Tech is profiting off pirated books and cheap content

The piracy habit illustrates Big Tech’s tendency to pinch pennies wherever people can be exploited. A software engineer at OpenAI, who works on content from these books, makes an annual salary of up to $370,000. Many book authors, though, never see that kind of income from their writing in their lifetimes, and yet their work is being used to refine and commercialize AI engines.

Although OpenAI’s valuation rose to $29 billion in June, it has also been accused of hiring a California-based agency, Sama, that allegedly underpaid Kenyan workers to perfect ChatGPT. Those workers made between $1.32 and $2 per hour, a small fraction of California’s minimum wage of $16.99 per hour.

Meta, too, has found itself under fire for similar reasons. This past March, Meta indicated that its largest investments henceforth will be in AI, and a month later, it announced that it would spend $33 billion to “introduce AI agents to billions of people in ways that will be useful and meaningful.” In June, it launched Llama 2, its latest large language model for commercial use.

But despite these grand announcements of expenditure, Meta has been hit with accusations that its subcontracted employees, recruited through Sama, work in poor conditions. Last year, one such former employee sued Meta and Sama in Nairobi, alleging labor exploitation and the suppression of union organizing efforts.

Google has invested $300 million in Anthropic, a company founded by ex-OpenAI employees and the maker of Claude, an AI chatbot that rivals ChatGPT. It is unclear how much Google has invested in its own Bard chatbot, which has been released to a wide audience in more than 40 languages.

Yet many of the people hired to train Bard are reportedly overworked, undertrained, and underpaid. Some contractors, pressured to deliver complex text audits on tight deadlines, make as little as $14 per hour. In contrast, the median salary for an AI engineer at Google is $230,745.