At its annual Worldwide Developers Conference yesterday, Apple announced updated versions of its mobile and desktop operating systems, including a focus on search, powered by its Siri “assistant” technology.

Apple is moving search closer to how we actually think—the stream of consciousness of errant thoughts and things that we want to know right this second, regardless of what device we’re on, or where we are—rather than having to fire up a web browser and manually conduct a Google keyword search.

Google’s search dominance is arguably nearing its peak, and instead of fighting head-on, Apple seems to be more interested in giving people access to information as soon as they want it. In other words, it is stepping between its users and Google.

Natural search on desktop

Apple has refined its Spotlight search engine for years, and now OS X will pull in information from a range of new sources beyond what’s on the computer. By pressing command-spacebar, Mac users will be able to get sports scores, directions, weather, and transit information, along with other snippets of information.

But Apple’s doing more than just bringing Siri to the desktop: Users can now search in their own words. If you want to see what you were working on last week, just type “Documents I worked on last week” into a Mac search bar, and the OS will pull them up. As Apple says on its preview page: “When you’re looking for something, just type it the way you’d say it.”

This function—which sounds a lot like the “natural language” search found in Facebook’s Graph Search—will be available in seven languages at launch. Being able to type what you’re thinking, how you’re thinking it, right into Spotlight without the need for a search page is almost certainly a shot across Google’s bow.

Siri is all over mobile

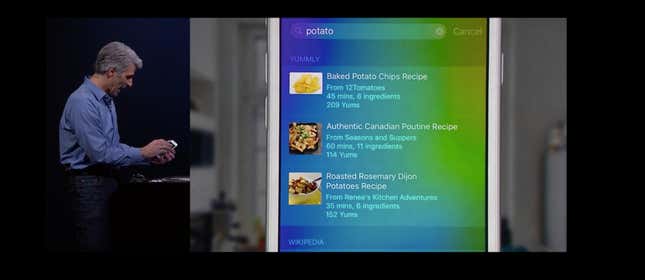

With iOS 9, Apple has given Siri the ability to take context into account, including the apps on your phone. You can ask her to start playing a specific song, or to remind you later in the day to finish an email you’re working on. Search results increasingly jump right into iOS apps. This means a user could search for a recipe in Spotlight and be pulled right into the pertinent information in a cooking app, without ever having to open a web browser or use a Google service.

Siri is also powering a new Spotlight search screen on iOS devices, meaning everything Siri can find, you can now access from the homescreen. This screen is also going to be pre-filled with contextual suggested information—contacts you might want to call, traffic conditions, apps you might be thinking of opening, and even news articles that you might be interested in—based on how, when, and where you use your phone.

Apple isn’t the only one thinking outside the search page. At its own developer conference last month, Google announced an update to its digital assistant that makes search an almost passive activity. For example: If you get a text about a restaurant, you can hit the home button, and Google Now will serve you up an information card about the restaurant. It’s a behavior that Google would love to get its Android users hooked on.

Bigger picture, Apple and Google are both pushing toward a future where we don’t need plain-old web search engines. The key difference: Apple’s core business—selling iPhones and Macs—is less tied to serving advertisements on search pages than Google’s, which generated 68% of its revenue last year from ads on its own websites.