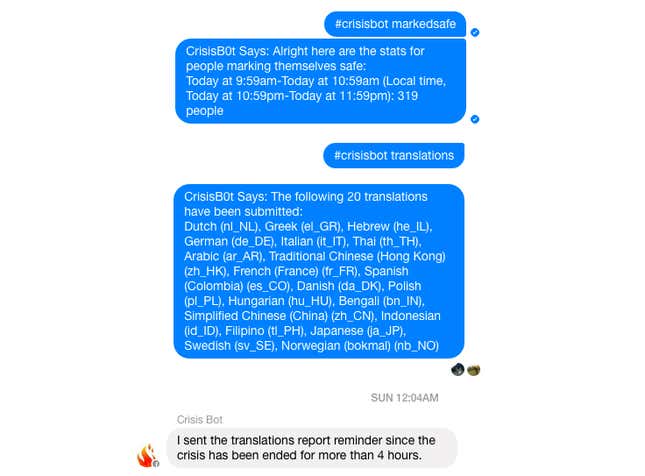

Facebook has built an internal-only Messenger bot called “Crisis Bot” to monitor what happens during major crises, when its Safety Check feature is activated.

Safety Check is a tool Facebook periodically deploys that lets users broadcast to their Facebook connections whether they’ve been dangerously affected by a crisis in their vicinity. Facebook engineers use Crisis Bot to check on how a new Safety Check activation is going and to request various data sets, such as how many people have been marked “safe.”

“This allowed us to migrate our entire launch monitoring process to mobile and colocate it where the discussions are happening — in Messenger itself,” Peter Cottle, Safety Check’s creator, wrote in a blog post.

Facebook’s announcement of Crisis Bot yesterday, June 2, also included news on how it’s revamping Safety Check. The company is moving away from a largely manual operation of Safety Check to a highly automated system that will allow the feature to be used far more frequently, Cottle wrote in his post. The feature was activated 11 times from the time it was introduced in Dec. 2014 through 2015, and 17 times this year alone, Cottle wrote. The availability of Safety Check invitations will be determined partially by an algorithm, he told TechCrunch, but Facebook plans to let users control sending Safety Check invitations to their Facebook friends.

Facebook has been criticized for how it deems which crises are important enough for a Safety Check activation. For instance, the feature was activated during the Paris terror attacks last November, but not during bombings in Beirut one day prior. The feature was not activated during attacks on a Côte d’Ivoire resort town in March this year, or during explosions in Jakarta in January.

Taken together, the new mechanics appear to insulate Facebook from criticism about exercising its judgment on whether to manually activate Safety Check in a given instance. Just as Facebook relied on the notion of algorithms being neutral in the debate over the political bias of its Trending Topics feature, it’s algorithms to the rescue again with Safety Check, another tricky instance where it’s been able to defer careful human judgment to automation.