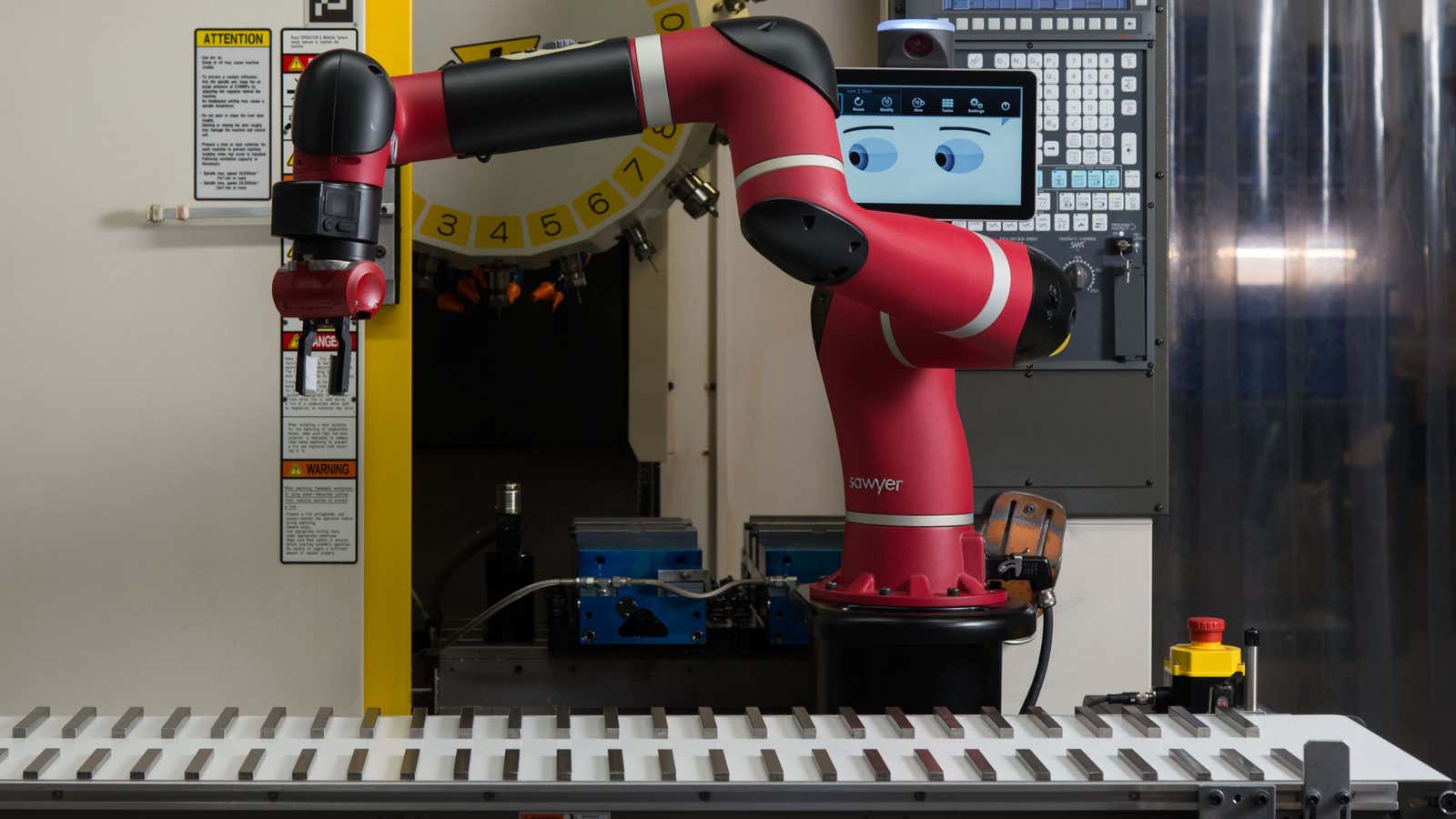

You’d never mistake Baxter and Sawyer for humans, though they were designed to work alongside them. Each has programmable robotic arms that swivel and twist at the joints, bright red plastic exteriors, and rigid mechanical movements.

And yet, despite not imitating humans otherwise, the robots have eyes.

The animated eyes are displayed on what looks like a tablet device above the robots’ arms, where a human might expect a head to be. Baxter and Sawyer obviously don’t use the eyes to see or to complete the factory tasks to which they’re assigned. So why do they have them?

“We wanted people to feel comfortable around Baxter,” says Bruce Blumberg, the first user interface engineer at Rethink Robotics, the Boston, Mass. company that created first Baxter and then Sawyer. “Part of feeling comfortable around Baxter was to have just enough cues so people could know what Baxter would do next.”

Unlike most industrial robots, Baxter works in the same spaces as human workers, who can program the robot to do different tasks without using a keyboard, by showing it how to move. The robot handles repetitive assembly line jobs, like moving items from a conveyor belt to a box, at thousands of companies, including General Electric’s lighting division and Steelcase.

As its main safety feature, it stops immediately if it makes unexpected contact with something, such as a human. Workers know this, but that’s not necessarily enough to put them at complete ease working alongside machines. Blumberg explains this in terms of his fear of snakes. “One of the things that makes me afraid of snakes is that I have no clue what the thing is going to do,” he says. “I can’t read snakes. Most people can’t. That’s part of the discomfort. I have no idea what it will do next.”

Because Rethink Robotics robots interact with humans, the company’s engineers developed the robots’ interfaces by studying the cues that humans use to understand each other. When humans pick up an object, Blumberg says, “The eyes, then the head, then the arm all move.” So when Baxter picks something up, its eyes and head move with its arm.

Before Blumberg worked on Baxter, he worked at a videogame company, and he describes the idea of incorporating eyes into a robot’s interface as “right out of animation.”

“Animation is all about the sparsest way to communicate intention,” he says. “We weren’t saying ‘this is a human.’ We were saying, ‘this is a machine that gives you some of the cues we [as humans] use to understand social beings in the world.’”